Performance

Environment

Cluster Information

| Instance | Nodes | CPU | Memory | Storage | Network | Description |
|---|---|---|---|---|---|---|
| Master | 3 | 32 | 32 GB | 120 GB SSD | 10 Gb/s | |
| MetaNode | 10 | 32 | 32 GB | 16 x 1TB SSD | 10 Gb/s | hybrid deployment |
| DataNode | 10 | 32 | 32 GB | 16 x 1TB SSD | 10 Gb/s | hybrid deployment |

Volume Setup

| Parameter | Default | Recommended | Description |
|---|---|---|---|
| FollowerRead | True | True | |
| Capacity | 10 GB | 300 000 000 GB | |
| Data Replica Number | 3 | 3 | |
| Meta Replica Number | 3 | 3 | |
| Data Partition Size | 120 GB | 120 GB | Logical upper limit with no pre-occupied space. |
| Data Partition Count | 10 | 1500 | |
| Meta Partition Count | 3 | 10 | |
| Cross Zone | False | False | |

Set the volume parameters as follows:

$ cfs-cli volume create test-vol {owner} --capacity=300000000 --mp-count=10
Create a new volume:
Name : test-vol
Owner : ltptest
Dara partition size : 120 GB
Meta partition count: 10
Capacity : 300000000 GB
Replicas : 3
Allow follower read : Enabled
Confirm (yes/no)[yes]: yes
Create volume success.
$ cfs-cli volume add-dp test-vol 1490
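The `add-dp` count above appears to be the recommended data partition count (1500) minus the default count the volume starts with (10). A minimal sketch of that arithmetic follows; `dp_to_add` is a hypothetical helper, not part of `cfs-cli`, and the script only prints the command rather than running it.

```shell
#!/bin/sh
# Sketch: derive how many data partitions to add after volume creation.
# dp_to_add is a hypothetical helper, not a cfs-cli feature.
dp_to_add() {
    target=$1    # desired data partition count (1500 is recommended above)
    current=$2   # partitions the volume already has (10 by default)
    echo $(( target - current ))
}

VOLUME="test-vol"
COUNT=$(dp_to_add 1500 10)

# Print the command instead of running it, so the sketch is safe to try anywhere.
echo "cfs-cli volume add-dp $VOLUME $COUNT"
```

Dropping the `echo` on the last line would run the command against a real cluster.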

Client Configuration

| Parameter | Default | Recommended |
|---|---|---|
| rate limit | -1 | -1 |

# Get the current IOPS limit; the default is -1 (no limit on IOPS):
$ curl "http://[ClientIP]:[ProfPort]/rate/get"
# Set the IOPS limits for writes and reads:
$ curl "http://[ClientIP]:[ProfPort]/rate/set?write=800&read=800"
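As an illustration of how the two endpoints above might be scripted, the sketch below builds the URLs from a client IP and prof port. `rate_get_url` and `rate_set_url` are hypothetical helpers, and the IP address and port are example values only. The script prints the `curl` commands rather than sending requests.

```shell
#!/bin/sh
# Sketch: build the client rate-limit URLs.
# rate_get_url and rate_set_url are hypothetical helpers.
rate_get_url() {
    # $1 = client IP, $2 = prof port
    echo "http://$1:$2/rate/get"
}

rate_set_url() {
    # $1 = client IP, $2 = prof port, $3 = write IOPS limit, $4 = read IOPS limit
    echo "http://$1:$2/rate/set?write=$3&read=$4"
}

# Example values only: this address and port are assumptions.
CLIENT_IP="192.0.2.10"
PROF_PORT="17410"

# The URL is quoted so the shell does not treat "&" as a background operator.
echo "curl \"$(rate_get_url "$CLIENT_IP" "$PROF_PORT")\""
echo "curl \"$(rate_set_url "$CLIENT_IP" "$PROF_PORT" 800 800)\""
```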

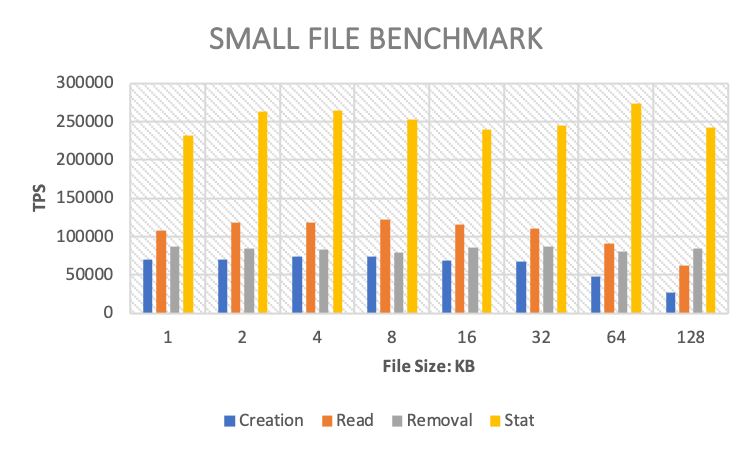

Small File Performance and Scalability

Small-file operation performance and scalability, benchmarked with mdtest.

Setup

#!/bin/bash
set -e
TARGET_PATH="/mnt/test/mdtest" # mount point of ChubaoFS volume
for FILE_SIZE in 1024 2048 4096 8192 16384 32768 65536 131072 # file size in bytes
do
    # -w: bytes written to each file after creation; -e: bytes read from each file
    mpirun --allow-run-as-root -np 512 --hostfile hfile64 mdtest -n 1000 -w $FILE_SIZE -e $FILE_SIZE -y -u -i 3 -N 1 -F -R -d $TARGET_PATH;
done

Benchmark

| File Size (KB) | 1 | 2 | 4 | 8 | 16 | 32 | 64 | 128 |
|---|---|---|---|---|---|---|---|---|
| Creation (TPS) | 70383 | 70383 | 73738 | 74617 | 69479 | 67435 | 47540 | 27147 |
| Read (TPS) | 108600 | 118193 | 118346 | 122975 | 116374 | 110795 | 90462 | 62082 |
| Removal (TPS) | 87648 | 84651 | 83532 | 79279 | 85498 | 86523 | 80946 | 84441 |
| Stat (TPS) | 231961 | 263270 | 264207 | 252309 | 240244 | 244906 | 273576 | 242930 |
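One way to read the creation and read rows is to convert TPS into an approximate data rate, since each operation moves one file of the given size. The sketch below does this with integer arithmetic. `data_rate_mib` is a hypothetical helper, and the result assumes the KB column means KiB.

```shell
#!/bin/sh
# Sketch: approximate data rate implied by a TPS figure and a file size.
# data_rate_mib is a hypothetical helper; the result is truncated to whole MiB/s.
data_rate_mib() {
    tps=$1        # operations per second from the table
    size_kib=$2   # file size in KiB (assumes the KB column means KiB)
    echo $(( tps * size_kib / 1024 ))
}

# Creation and read at the smallest and largest file sizes in the table.
echo "create   1 KB: $(data_rate_mib 70383 1) MiB/s"
echo "create 128 KB: $(data_rate_mib 27147 128) MiB/s"
echo "read     1 KB: $(data_rate_mib 108600 1) MiB/s"
echo "read   128 KB: $(data_rate_mib 62082 128) MiB/s"
```

Under these assumptions, TPS falls as files grow while the implied data rate rises, which would be consistent with larger files being limited by bandwidth rather than by operation count.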

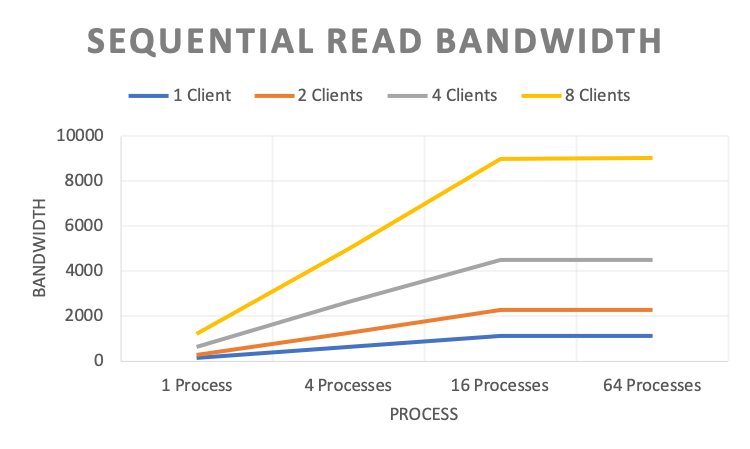

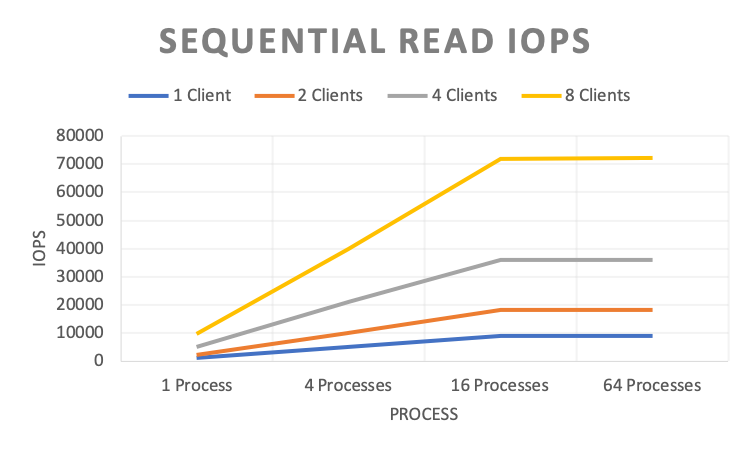

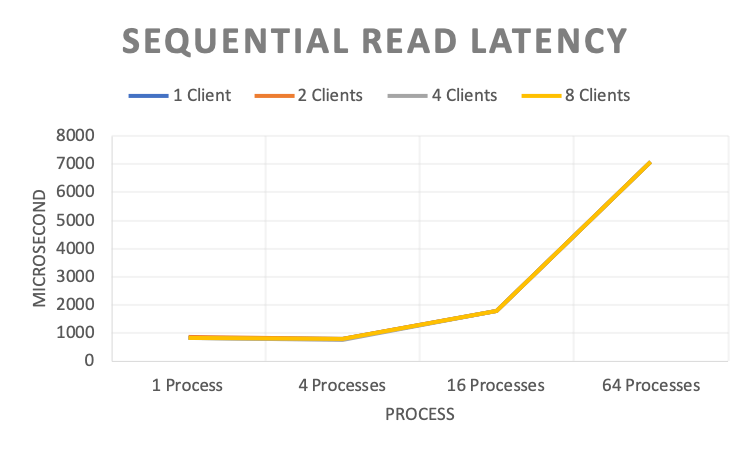

IO Performance and Scalability

IO performance and scalability, benchmarked with fio.

Note: Multiple clients mount the same volume, and "process" refers to an fio process.

1. Sequential Read

Setup

#!/bin/bash
# -rw=read: sequential read
# -bs=128k: block size
# -direct=1: enable direct IO
fio -directory={} \
    -ioengine=psync \
    -rw=read \
    -bs=128k \
    -direct=1 \
    -group_reporting=1 \
    -fallocate=none \
    -time_based=1 \
    -runtime=120 \
    -name=test_file_c{} \
    -numjobs={} \
    -nrfiles=1 \
    -size=10G
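The `{}` placeholders in the setup script are filled in per run. A minimal sketch of a driver that does so is shown below. It assumes the placeholders stand for the target directory, a client index in the job name, and the fio process count; `build_fio_cmd`, the path `/mnt/cfs/fio` and client index `1` are all hypothetical. It prints the commands instead of executing fio.

```shell
#!/bin/sh
# Sketch: print the sequential-read fio command for one client and process count.
# build_fio_cmd is a hypothetical helper; it only fills the {} placeholders.
build_fio_cmd() {
    dir=$1       # directory inside the mounted volume
    client=$2    # client index, used in the job name (assumed meaning of c{})
    numjobs=$3   # number of fio processes
    echo "fio -directory=$dir -ioengine=psync -rw=read -bs=128k -direct=1" \
         "-group_reporting=1 -fallocate=none -time_based=1 -runtime=120" \
         "-name=test_file_c$client -numjobs=$numjobs -nrfiles=1 -size=10G"
}

# Process counts match the result table columns; path and client index are assumptions.
for NUMJOBS in 1 4 16 64
do
    build_fio_cmd /mnt/cfs/fio 1 "$NUMJOBS"
done
```

Changing `-rw` and `-bs` in the helper would produce the command lines for the other three workloads.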

Bandwidth (MB/s)

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 148.000 | 626.000 | 1129.000 | 1130.000 |
| 2 Clients | 284.000 | 1241.000 | 2258.000 | 2260.000 |
| 4 Clients | 619.000 | 2640.000 | 4517.000 | 4515.000 |
| 8 Clients | 1193.000 | 4994.000 | 9006.000 | 9034.000 |

IOPS

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 1180.000 | 5007.000 | 9031.000 | 9040.000 |
| 2 Clients | 2275.000 | 9924.000 | 18062.000 | 18081.000 |
| 4 Clients | 4954.000 | 21117.000 | 36129.000 | 36112.000 |
| 8 Clients | 9531.000 | 39954.000 | 72048.000 | 72264.000 |

Latency (Microsecond)

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 842.200 | 794.340 | 1767.310 | 7074.550 |
| 2 Clients | 874.255 | 801.690 | 1767.370 | 7071.715 |
| 4 Clients | 812.363 | 760.702 | 1767.710 | 7077.065 |
| 8 Clients | 837.707 | 799.851 | 1772.620 | 7076.967 |
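The three sequential-read tables can be cross-checked against each other. The sketch below assumes the MB/s column is really MiB/s (as fio reports it) and that each fio process issues one synchronous request at a time, so average latency is roughly concurrency divided by IOPS (Little's law). Both helper names are hypothetical.

```shell
#!/bin/sh
# Sketch: cross-check the sequential-read tables with integer arithmetic.

# Bandwidth in MiB/s implied by an IOPS figure ($1) at a block size in KiB ($2).
bandwidth_mib() {
    echo $(( $1 * $2 / 1024 ))
}

# Average latency in microseconds implied by $1 synchronous processes
# sharing a total of $2 IOPS (latency = concurrency / throughput).
latency_us() {
    echo $(( $1 * 1000000 / $2 ))
}

# 1 client, 64 processes: 9040 IOPS at 128 KiB blocks.
echo "bandwidth: $(bandwidth_mib 9040 128) MB/s (table: 1130.000)"
echo "latency:   $(latency_us 64 9040) us (table: 7074.550)"
```

The computed values land close to the table entries, which suggests that at 64 processes the extra latency is queueing behind a per-client bandwidth ceiling rather than slower individual requests.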

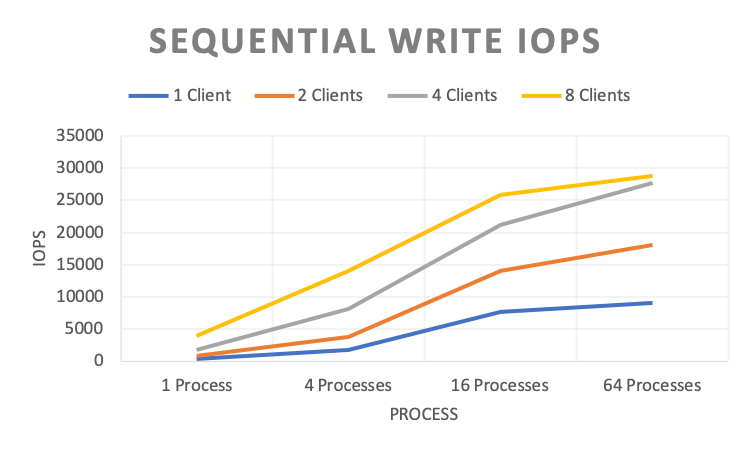

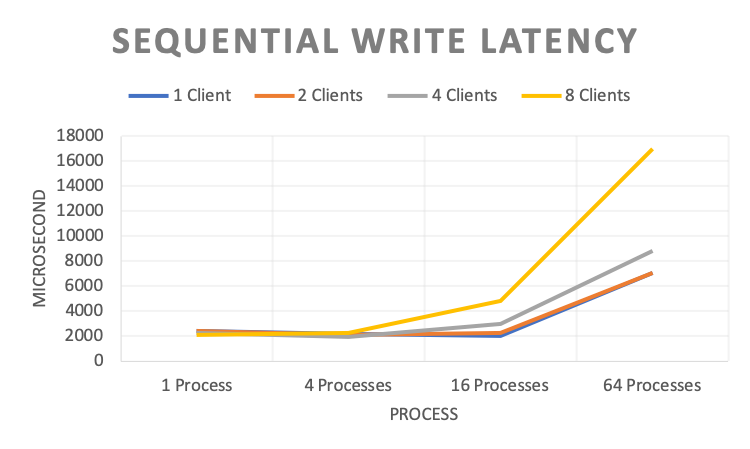

2. Sequential Write

Setup

#!/bin/bash
# -rw=write: sequential write
# -bs=128k: block size
# -direct=1: enable direct IO
fio -directory={} \
    -ioengine=psync \
    -rw=write \
    -bs=128k \
    -direct=1 \
    -group_reporting=1 \
    -fallocate=none \
    -name=test_file_c{} \
    -numjobs={} \
    -nrfiles=1 \
    -size=10G

Bandwidth (MB/s)

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 52.200 | 226.000 | 956.000 | 1126.000 |
| 2 Clients | 104.500 | 473.000 | 1763.000 | 2252.000 |
| 4 Clients | 225.300 | 1015.000 | 2652.000 | 3472.000 |
| 8 Clients | 480.600 | 1753.000 | 3235.000 | 3608.000 |

IOPS

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 417 | 1805 | 7651 | 9004 |
| 2 Clients | 835 | 3779 | 14103 | 18014 |
| 4 Clients | 1801 | 8127 | 21216 | 27777 |
| 8 Clients | 3841 | 14016 | 25890 | 28860 |

Latency (Microsecond)

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 2385.400 | 2190.210 | 2052.360 | 7081.320 |
| 2 Clients | 2383.610 | 2081.850 | 2233.790 | 7079.450 |
| 4 Clients | 2216.305 | 1947.688 | 2946.017 | 8842.903 |
| 8 Clients | 2073.921 | 2256.120 | 4787.496 | 17002.425 |
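To compare how the sequential workloads scale with client count, one option is to express the N-client bandwidth as a percentage of N times the single-client bandwidth. The sketch below does this for the 64-process columns. `scaling_pct` is a hypothetical helper and truncates to a whole percentage.

```shell
#!/bin/sh
# Sketch: scaling efficiency of N clients relative to N times the single-client figure.
# scaling_pct is a hypothetical helper; the result is a truncated integer percentage.
scaling_pct() {
    single=$1    # bandwidth with 1 client
    multi=$2     # bandwidth with N clients
    clients=$3   # N
    echo $(( 100 * multi / (single * clients) ))
}

# 64-process bandwidth figures, 1 client versus 8 clients.
echo "sequential read:  $(scaling_pct 1130 9034 8)%"
echo "sequential write: $(scaling_pct 1126 3608 8)%"
```

By this measure, sequential read scales almost linearly up to 8 clients, while sequential write reaches well under half of linear scaling at 8 clients and 64 processes.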

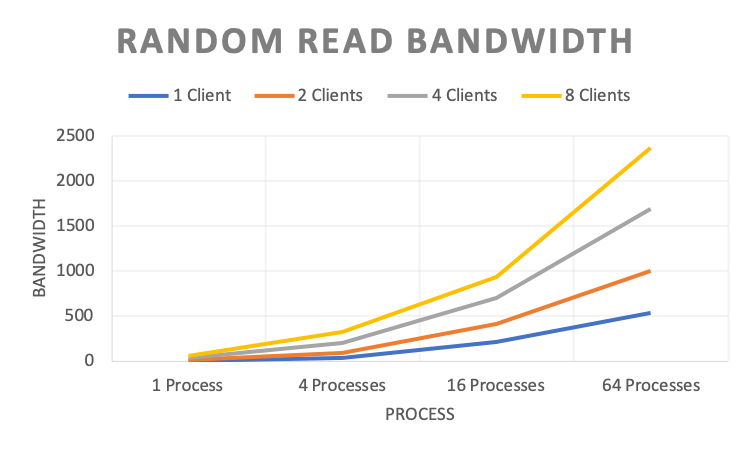

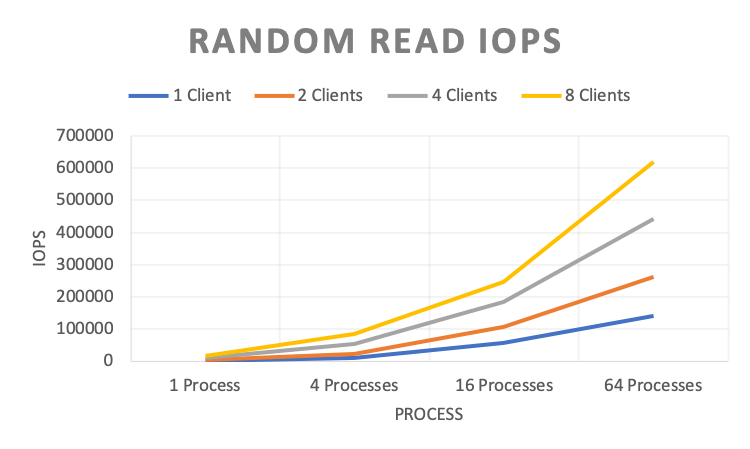

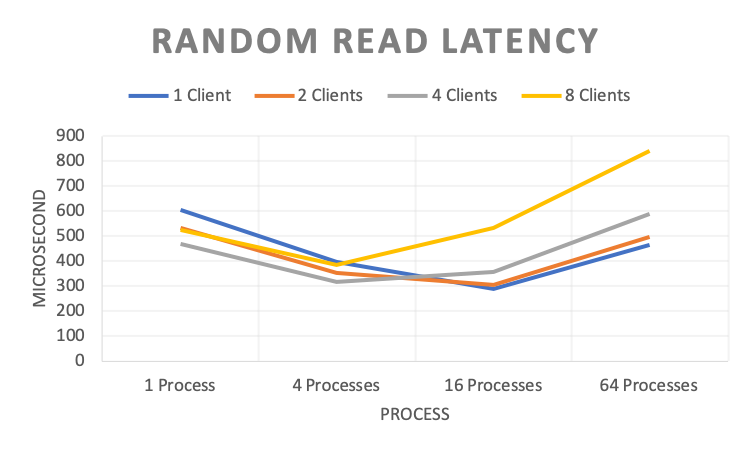

3. Random Read

Setup

#!/bin/bash
# -rw=randread: random read
# -bs=4k: block size
# -direct=1: enable direct IO
fio -directory={} \
    -ioengine=psync \
    -rw=randread \
    -bs=4k \
    -direct=1 \
    -group_reporting=1 \
    -fallocate=none \
    -time_based=1 \
    -runtime=120 \
    -name=test_file_c{} \
    -numjobs={} \
    -nrfiles=1 \
    -size=10G

Bandwidth (MB/s)

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 6.412 | 39.100 | 216.000 | 534.000 |
| 2 Clients | 14.525 | 88.100 | 409.000 | 1002.000 |
| 4 Clients | 33.242 | 200.200 | 705.000 | 1693.000 |
| 8 Clients | 59.480 | 328.300 | 940.000 | 2369.000 |

IOPS

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 1641 | 10240 | 56524.800 | 140288 |
| 2 Clients | 3718 | 23142.4 | 107212.8 | 263168 |
| 4 Clients | 8508 | 52428.8 | 184627.2 | 443392 |
| 8 Clients | 15222 | 85072.8 | 246681.6 | 621056 |

Latency (Microsecond)

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 603.580 | 395.420 | 287.510 | 466.320 |
| 2 Clients | 532.840 | 351.815 | 303.460 | 497.100 |
| 4 Clients | 469.025 | 317.140 | 355.105 | 588.847 |
| 8 Clients | 524.709 | 382.862 | 530.811 | 841.985 |

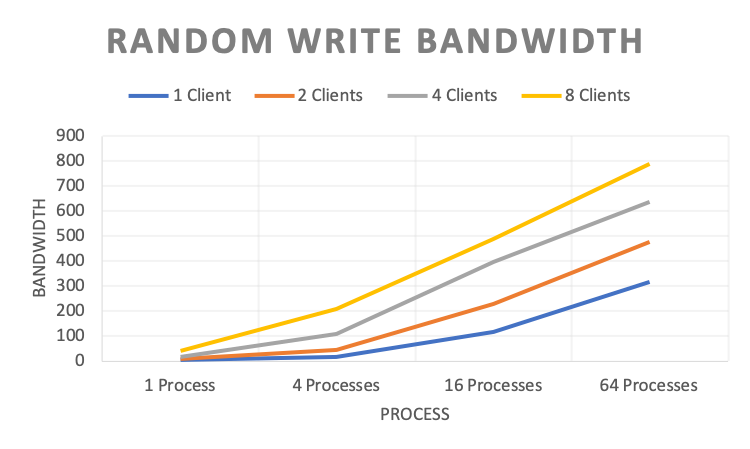

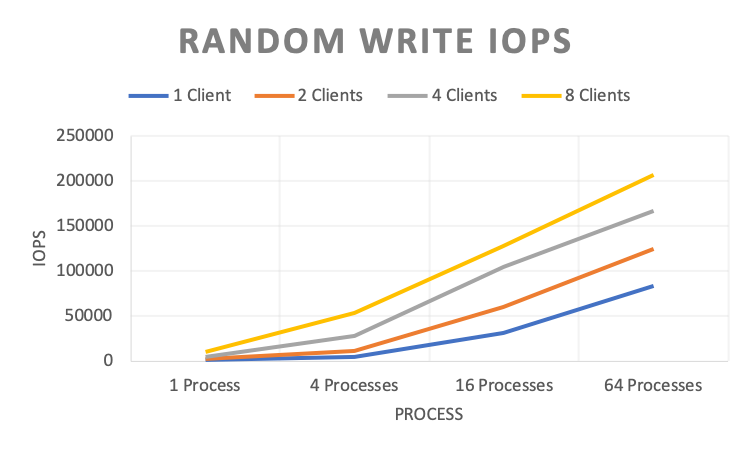

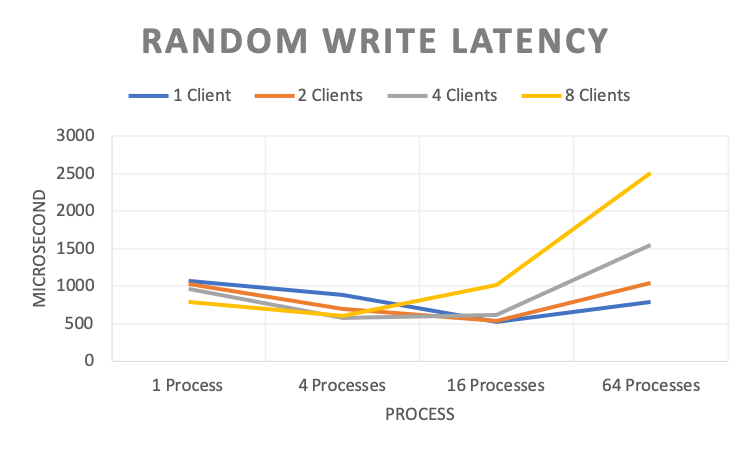

4. Random Write

Setup

#!/bin/bash
# -rw=randwrite: random write
# -bs=4k: block size
# -direct=1: enable direct IO
fio -directory={} \
    -ioengine=psync \
    -rw=randwrite \
    -bs=4k \
    -direct=1 \
    -group_reporting=1 \
    -fallocate=none \
    -time_based=1 \
    -runtime=120 \
    -name=test_file_c{} \
    -numjobs={} \
    -nrfiles=1 \
    -size=10G

Bandwidth (MB/s)

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 3.620 | 17.500 | 118.000 | 318.000 |
| 2 Clients | 7.540 | 44.800 | 230.000 | 476.000 |
| 4 Clients | 16.245 | 107.700 | 397.900 | 636.000 |
| 8 Clients | 39.274 | 208.100 | 487.100 | 787.100 |

IOPS

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 926.000 | 4476.000 | 31027.200 | 83251.200 |
| 2 Clients | 1929.000 | 11473.000 | 60313.600 | 124620.800 |
| 4 Clients | 4156.000 | 27800.000 | 104243.200 | 167014.400 |
| 8 Clients | 10050.000 | 53250.000 | 127692.800 | 206745.600 |

Latency (Microsecond)

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 1073.150 | 887.570 | 523.820 | 784.030 |
| 2 Clients | 1030.010 | 691.530 | 539.525 | 1042.685 |
| 4 Clients | 955.972 | 575.183 | 618.445 | 1552.205 |
| 8 Clients | 789.883 | 598.393 | 1016.185 | 2506.424 |

Metadata Performance and Scalability

Metadata performance and scalability, benchmarked with mdtest.

Setup

#!/bin/bash
TEST_PATH=/mnt/cfs/mdtest # mount point of ChubaoFS volume
for CLIENTS in 1 2 4 8 # number of clients
do
    mpirun --allow-run-as-root -np $CLIENTS --hostfile hfile01 mdtest -n 5000 -u -z 2 -i 3 -d $TEST_PATH;
done

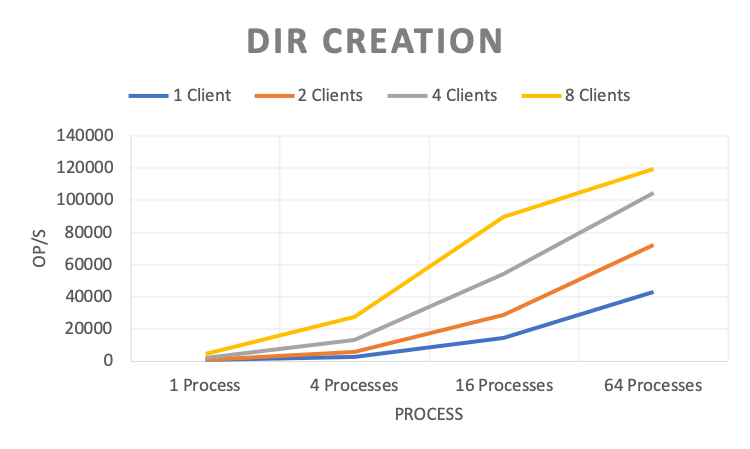

Dir Creation

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 448.618 | 2421.001 | 14597.97 | 43055.15 |
| 2 Clients | 956.947 | 5917.576 | 28930.431 | 72388.765 |
| 4 Clients | 2027.02 | 13213.403 | 54449.056 | 104771.356 |
| 8 Clients | 4643.755 | 27416.904 | 89641.301 | 119542.62 |

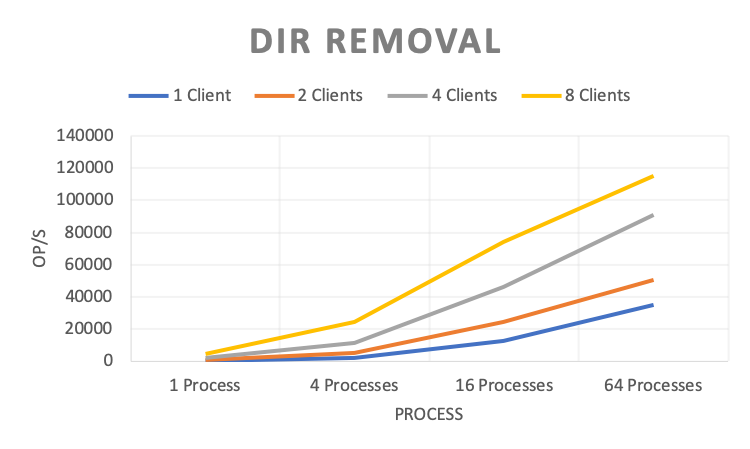

Dir Removal

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 399.779 | 2118.005 | 12351.635 | 34903.672 |
| 2 Clients | 833.353 | 5176.812 | 24471.674 | 50242.973 |
| 4 Clients | 1853.617 | 11462.927 | 46413.313 | 91128.059 |
| 8 Clients | 4441.435 | 24133.617 | 74401.336 | 115013.557 |

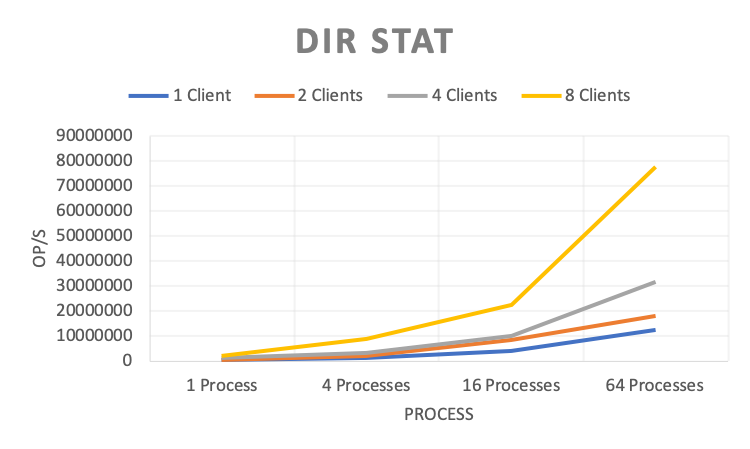

Dir Stat

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 283232.761 | 1215309.524 | 4231088.104 | 12579177.02 |
| 2 Clients | 572834.143 | 2169669.058 | 8362749.217 | 18120970.71 |
| 4 Clients | 1263474.549 | 3333746.786 | 10160929.29 | 31874265.88 |
| 8 Clients | 2258670.069 | 8715752.83 | 22524794.98 | 77533648.04 |

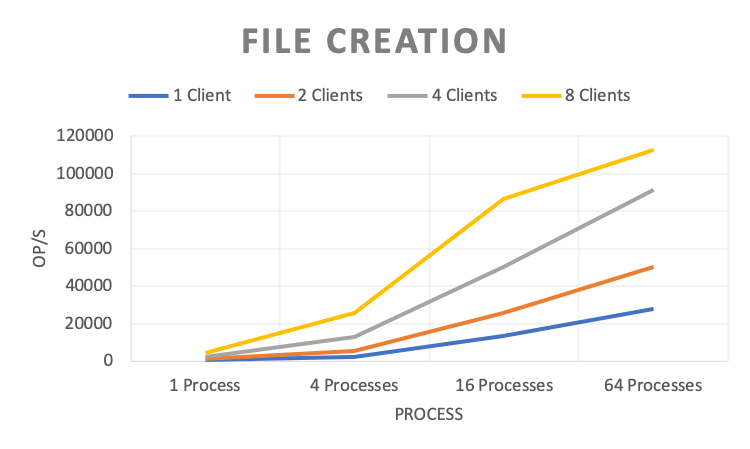

File Creation

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 448.888 | 2400.803 | 13638.072 | 27785.947 |
| 2 Clients | 925.68 | 5664.166 | 25889.163 | 50434.484 |
| 4 Clients | 2001.137 | 12986.968 | 50330.952 | 91387.825 |
| 8 Clients | 4479.831 | 25933.437 | 86667.966 | 112746.199 |

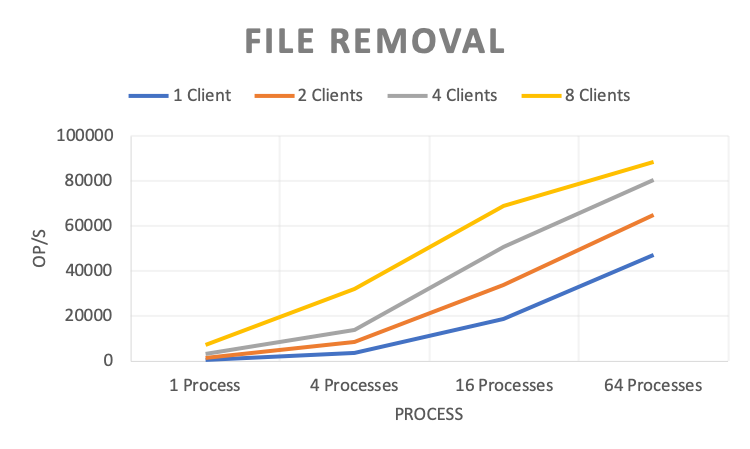

File Removal

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 605.143 | 3678.138 | 18631.342 | 47035.912 |
| 2 Clients | 1301.151 | 8365.667 | 34005.25 | 64860.041 |
| 4 Clients | 3032.683 | 14017.426 | 50938.926 | 80692.761 |
| 8 Clients | 7170.386 | 32056.959 | 68761.908 | 88357.563 |

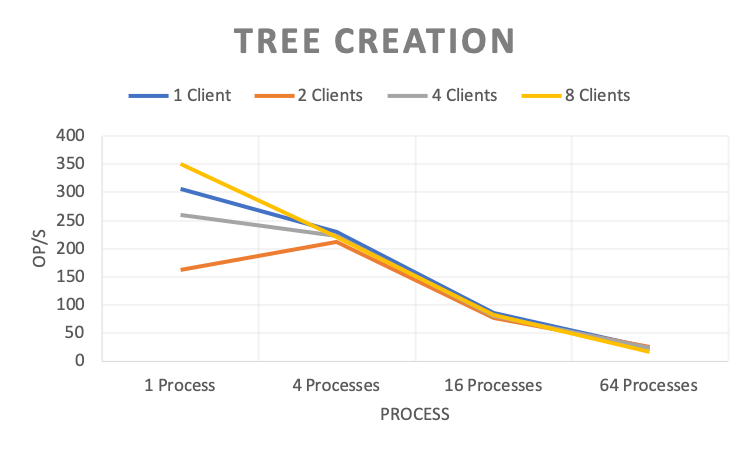

Tree Creation

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 305.778 | 229.562 | 86.299 | 23.917 |
| 2 Clients | 161.31 | 211.119 | 76.301 | 24.439 |
| 4 Clients | 260.562 | 223.153 | 81.209 | 23.867 |
| 8 Clients | 350.038 | 220.744 | 81.621 | 17.144 |

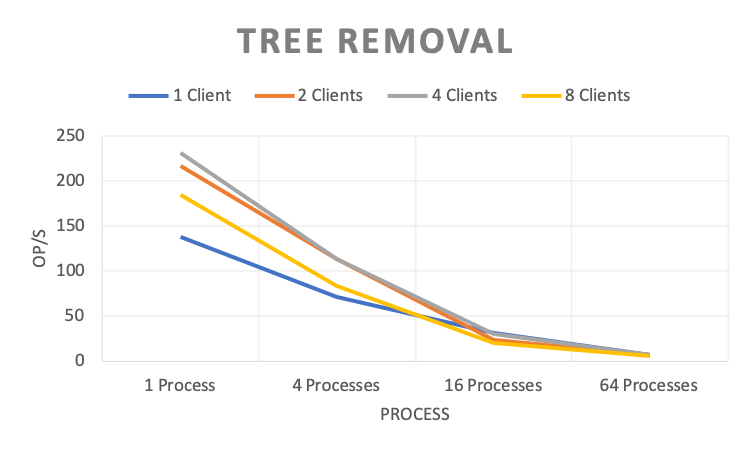

Tree Removal

| | 1 Process | 4 Processes | 16 Processes | 64 Processes |
|---|---|---|---|---|
| 1 Client | 137.462 | 70.881 | 31.235 | 7.057 |
| 2 Clients | 217.026 | 113.36 | 23.971 | 7.128 |
| 4 Clients | 231.622 | 113.539 | 30.626 | 7.299 |
| 8 Clients | 185.156 | 83.923 | 20.607 | 5.515 |

Integrity

- Linux Test Project / fs

Workload

- Database backup

- Java application logs

- Code git repo

- Database systems: MyRocks, MySQL InnoDB, HBase

Scalability

- Volume Scalability: tens to millions of cfs volumes

- Metadata Scalability: a big volume with billions of files/directories